Two Wrong Answers: How Defense Firms Are Getting AI Cyber Risk Backwards

The two biggest cyber risks of AI in litigation defense are doing nothing and doing it yourself

Andy Anderson · May 2026

On a spring morning in 2025, a man in a polo shirt walked into the lobby of an American law firm, said he was from IT, and was waved upstairs. He plugged a small drive into a partner's workstation, copied four terabytes of client-matter files, and walked out. The FBI calls his employers the Silent Ransom Group; security researchers also know them as Luna Moth. Shortly afterward, the firm received a polite extortion note. [1]

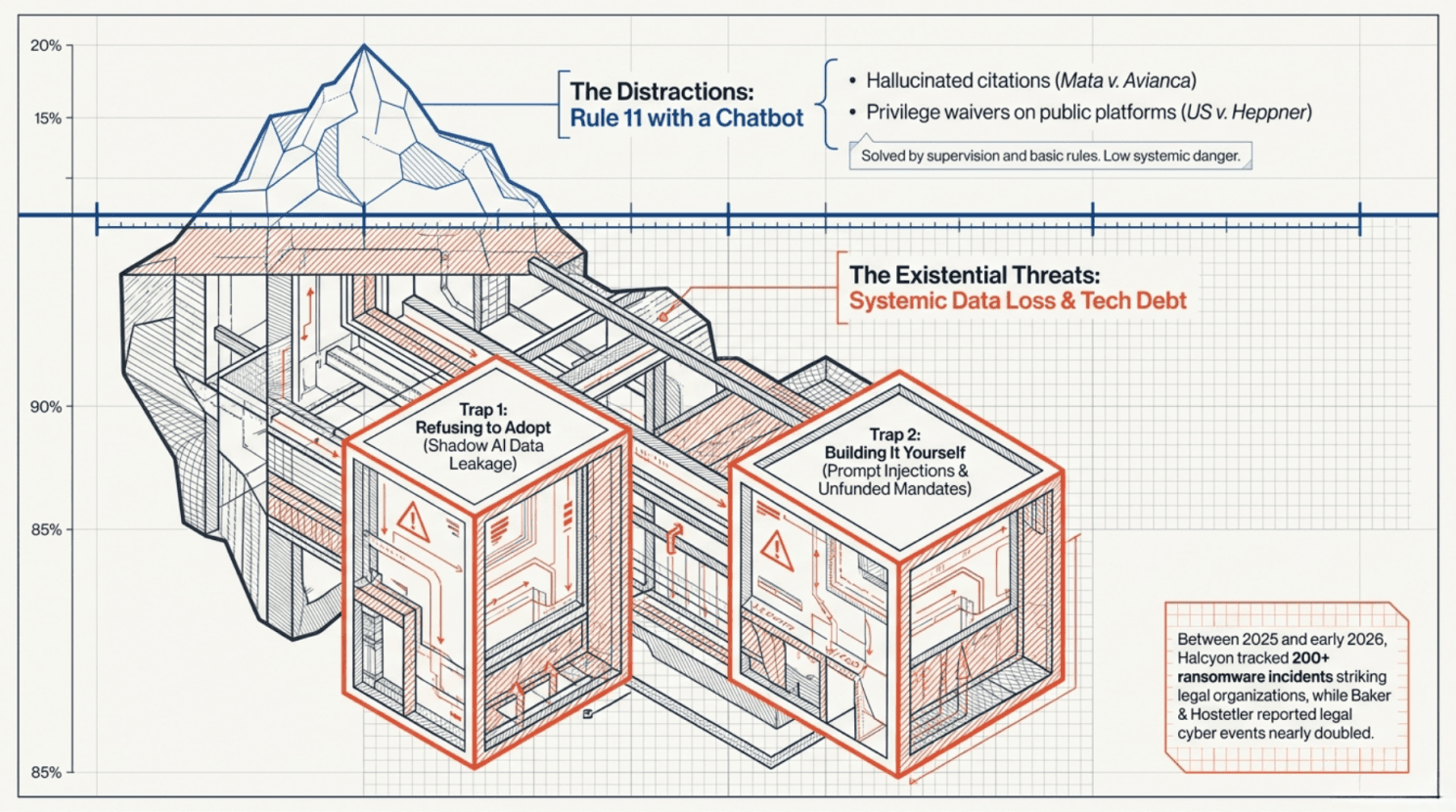

It was not, as such things go, an unusually bad month. Halcyon counts more than two hundred ransomware incidents striking legal organizations between 2025 and early 2026. [2] Baker & Hostetler, whose digital-incident response team handled 1,250 cyber events last year, reports that the number involving law firms nearly doubled. [3]

Onto this terrain, generative AI has arrived bearing two apparent gifts: a new layer of cyber risk for managing partners to worry about, and a thriving consultancy industry to charge them for worrying about it. Hallucinated citations, privilege waivers, rogue chatbots — these have become standard fare at every legal-tech conference, and most managing partners now leave with the same takeaway: AI is, on balance, a liability to be managed.

This is precisely backwards. The two largest AI-related cyber risks for a defense firm in 2026 are, in order, refusing to adopt the technology at all and trying to build it yourself.

The Argument So Far

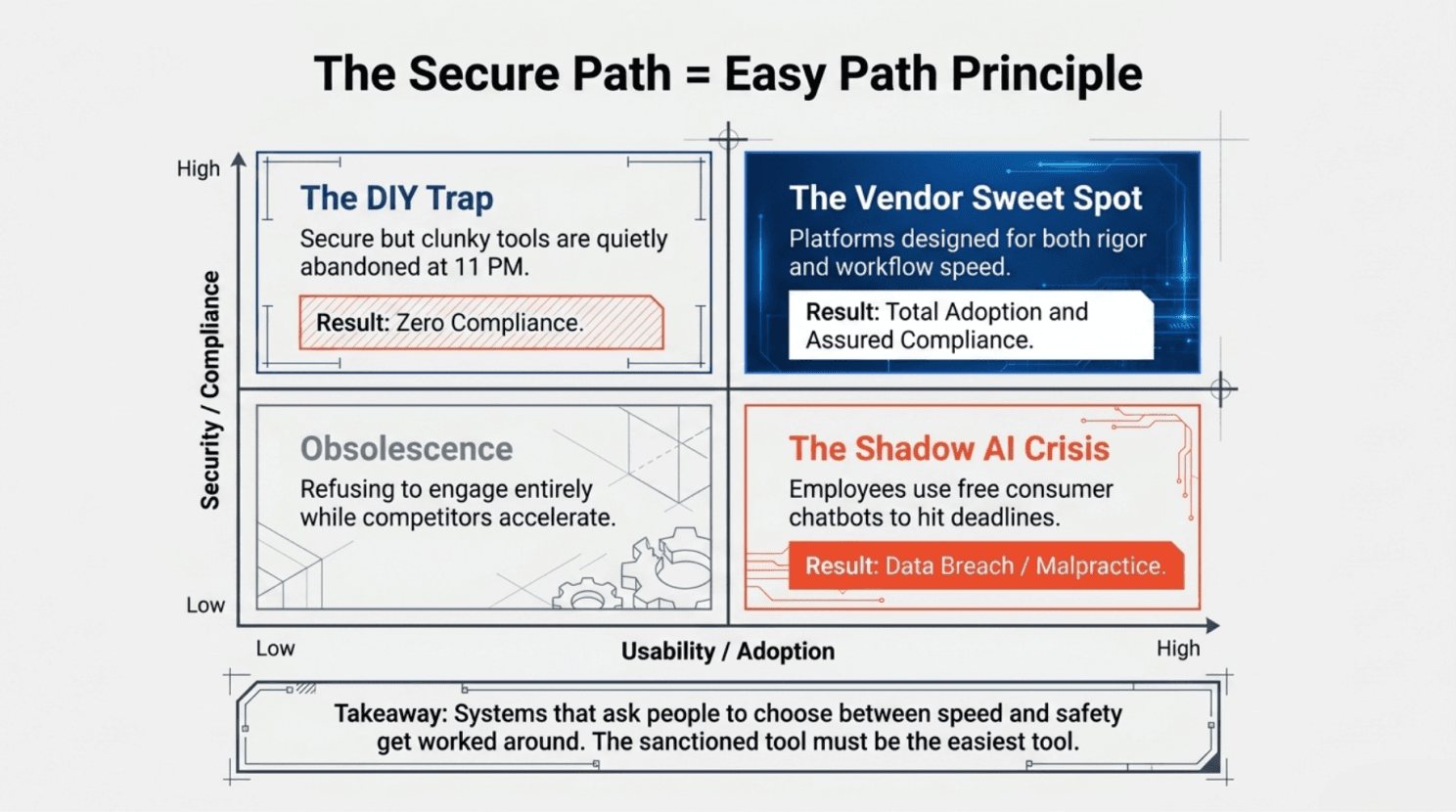

This is the third piece in a series. The first argued that the financial prize for getting AI right in litigation defense (savings, win-rate gains, market share) is large enough to redraw the competitive map of the industry. The second argued that the firms that capture that prize will be those that make the secure way of doing things also the easiest way. Systems designed so that the right choice is the path of least resistance get operated as designed. Systems that ask people to choose between speed and safety get worked around.

This piece is about the cyber question that follows from both: whether the path to AI runs through the firm's own IT department, or through someone else's. There is, on inspection, only one defensible answer.

The real risks are less visible and less discussed, and therefore more dangerous

The Wrong Conversation

It will help to clear the decks of the loud risks first, since they are the ones managing partners hear about most.

Steven Schwartz's name has, since May 2023, become shorthand for AI gone wrong in legal practice. The New York attorney filed an opposition brief in Mata v. Avianca, citing six federal cases that did not exist; ChatGPT had invented them, and confirmed they were real when asked. [4] Judge P. Kevin Castel, less easily reassured, fined Schwartz, his colleague, and their firm $5,000 each. Subsequent sanctions cases, of which Johnson v. Dunn in the Northern District of Alabama is the cleanest recent example, have enforced the same lesson: the lawyer who signs the brief is responsible for everything in it. [5] These are not new principles. They are Rule 11 with a chatbot.

The more recent panic, around United States v. Heppner, has been similarly overstated. Judge Jed Rakoff held in February 2026 that exchanges between a criminal defendant and the consumer version of Anthropic's Claude were not protected by attorney-client privilege — but the defendant had used a public platform on his own initiative, and the platform's terms of service explicitly disclaimed confidentiality. [6] A week earlier, in Warner v. Gilbarco, the Eastern District of Michigan held that work-product protection did apply to AI-assisted drafting, because the doctrine protects litigation thinking regardless of medium. [7] Read together, the cases simply confirm what privilege law has held for decades, going back to Kovel: who uses the tool, at whose direction, for what purpose, and whether confidentiality is preserved are the questions that matter. The technology is new; the framework is not.

These risks deserve attention. They do not deserve the panic. The firms making serious AI cyber mistakes in 2026 are mostly making different ones.

Banning a tool that makes the job fundamentally easier for overworked people is a recipe for creating rule breakers

The First Mistake Is Not Adopting It

Begin with the firms that have decided AI is too dangerous to use.

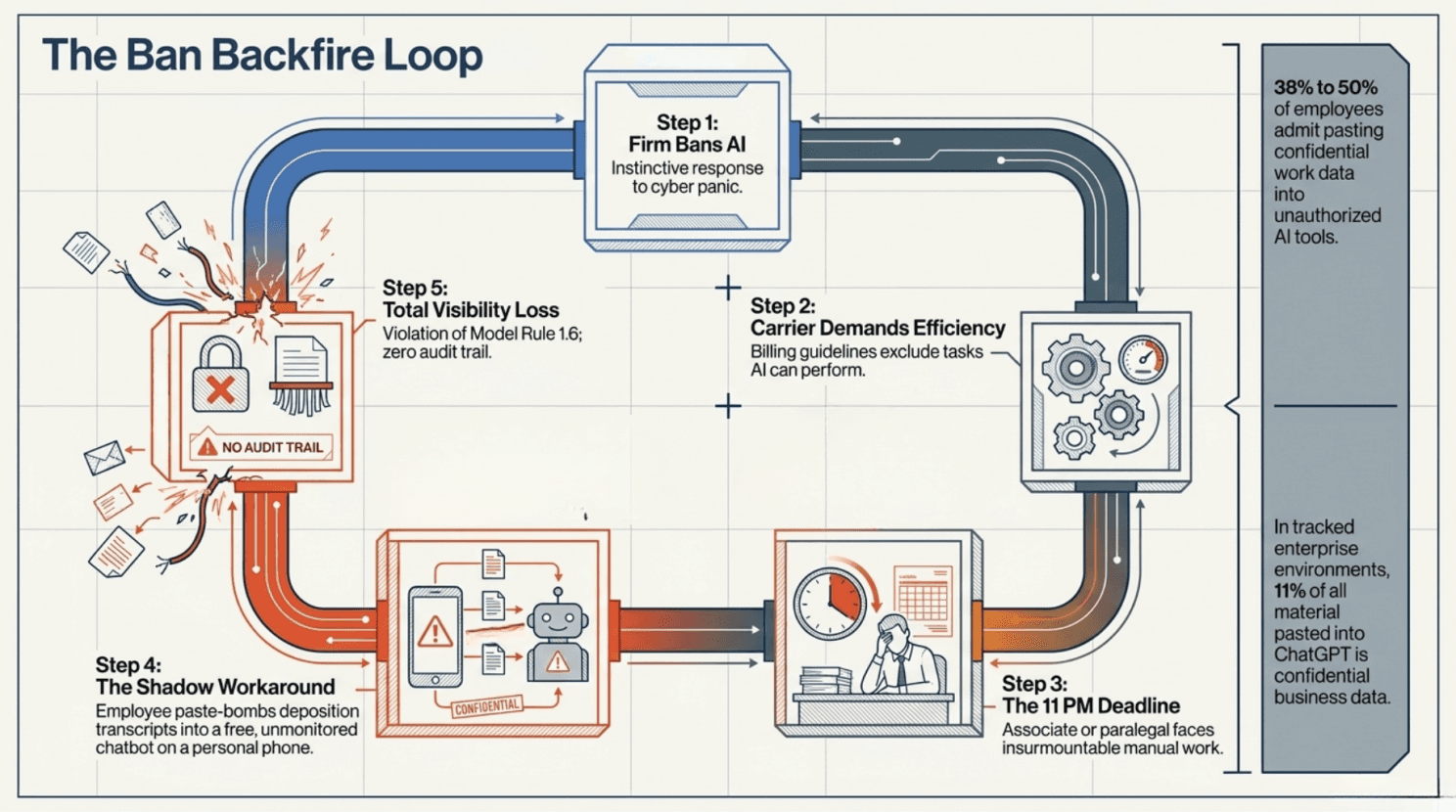

They have done so, in most cases, without realizing that their staff has already decided otherwise. Recent surveys put the share of employees who admit to pasting confidential work data into AI tools without authorization at between 38% and just under half. [8] In one tracked enterprise environment, 11% of all material pasted into ChatGPT was confidential, including business data, source code, financial records, and client information. [9] By mid-2025, leaks tied to unauthorized use of generative AI had become a leading category of insider data loss.

What this looks like inside a defense firm is depressingly familiar. An associate, three nights into a brief, pastes a long deposition transcript into a free chatbot to summarize it. A paralegal uses an unvetted transcription service to convert a privileged client call into editable text. A partner uploads opposing counsel's expert report to a generic chatbot to "see what it thinks." All three happen, all three violate Model Rule 1.6, and none of them generates any audit trail at all.

The instinctive response, a ban, does not work. AI is now embedded in Microsoft 365, Westlaw, and Lexis; new associates are learning to use it in law school. Carriers are rewriting their billing guidelines to exclude time spent on tasks AI can perform, and signaling that they will favor firms that have adopted sanctioned tools. [10] A firm that bans AI succeeds only in driving its use onto unmonitored personal devices, where the data-leakage problem is at its most severe and the firm's visibility at its least. The principle from the prior piece in this series applies directly: the firm's sanctioned tool must be the easiest option, not merely the most virtuous one. The choice is not whether your people will use AI; it is whether the version they reach for is one the firm has chosen.

There is also a doctrinal point here that the bar associations have begun to make with some force. ABA Model Rule 1.1, since the 2012 amendment to Comment 8, has explicitly required lawyers to keep abreast of "the benefits and risks associated with relevant technology." Most state bars have adopted some version of it. A managing partner who has decided that the firm will simply not engage with generative AI is making a bet about the technology. Whatever that bet's merits, it is an indefensible bet about the rule.

Building prototypes is easy; building dependable products is much harder

The Second Mistake Is Building It Yourself

The firms that have decided to engage with AI now confront a more interesting question: build or buy.

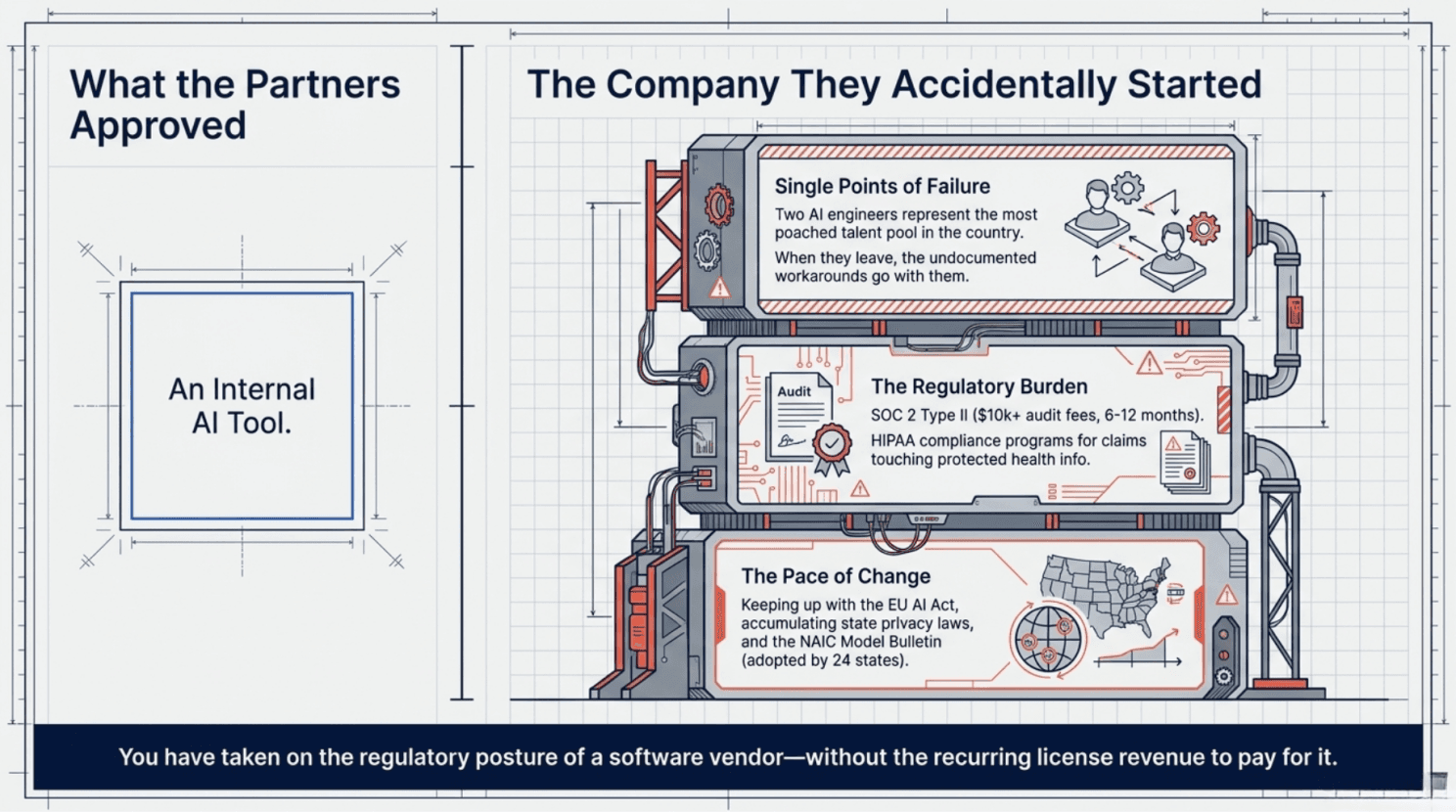

Some of the larger firms, and a surprising number of mid-size ones, have concluded that the answer is to build. The reasoning is intuitive. We have smart people. We have IT. We have specific workflows nobody else understands. Why pay a vendor a recurring license fee for something we could do in-house, with full control over the data and the model?

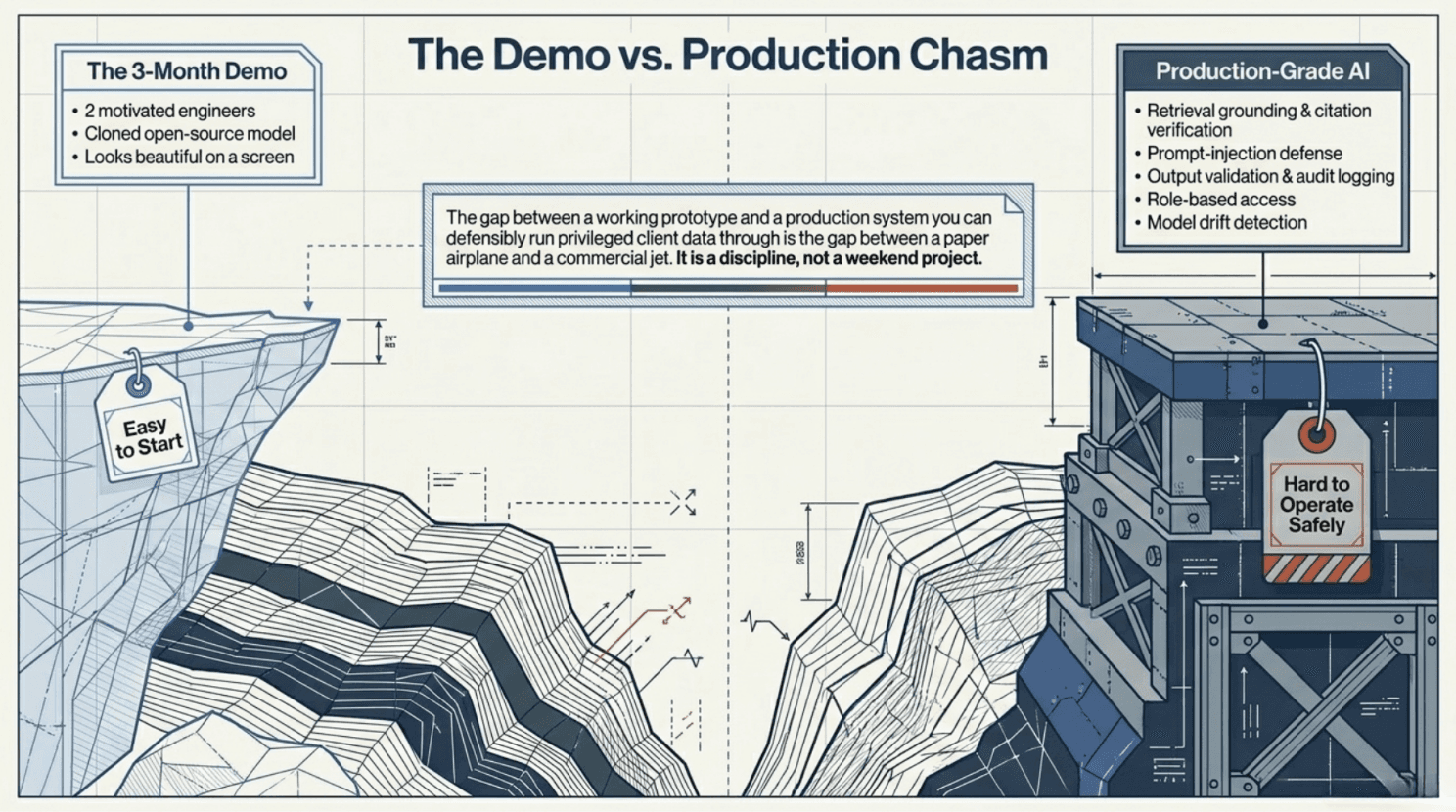

The reasoning is also wrong in most cases. Building AI infrastructure for a litigation defense practice is not a project. It is a permanent commitment to operate a small AI software company alongside a law firm, and most failures will not look like AI failures. They will look like cyber incidents.

Capability is not reliability. A team of two motivated engineers can build something that demos beautifully in three months. The gap between that demo and a system you can put privileged work through every day, without getting it wrong in expensive ways, is roughly the gap between a working prototype and a production aircraft. That gap is filled with retrieval grounded against verified case databases, citation verification, prompt-injection defenses, output validation, audit logging, role-based access, and the ability to detect and recover from model drift when the underlying API changes. None of this comes free with the demo. All of it has to be built, tested, monitored, and maintained. The vendors that do this for a living have invested years and millions of dollars in the parts that don't show up on the demo screen.

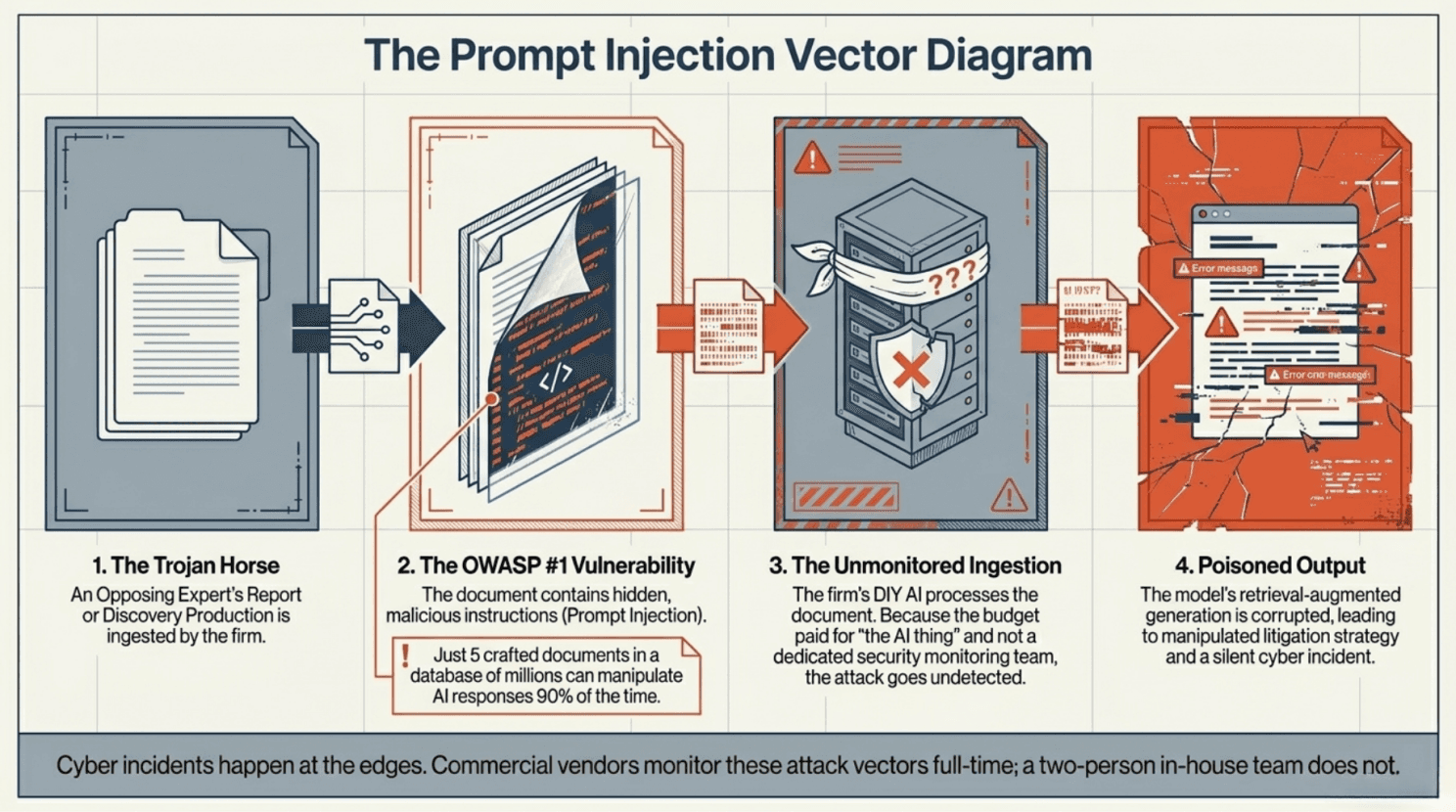

Cyber incidents happen at the edges. This is true generally, and exquisitely true of large language models. Researchers demonstrated last year that injecting just five carefully crafted documents into a retrieval database of millions can steer an AI's answer to a targeted question 90% of the time. [11] The OWASP Top 10 for LLM Applications now lists prompt injection (malicious instructions hidden inside an ordinary-looking document) as the number one vulnerability class. [12] In a litigation context, the document with the malicious instructions could easily be a deposition exhibit, an opposing expert's report, or a discovery production. A vendor with a security team monitors for these things full-time. An in-house build does not, because the partners who approved the budget are not paying for a security team; they are paying for "the AI thing."

What else arrives in the discovery packet?

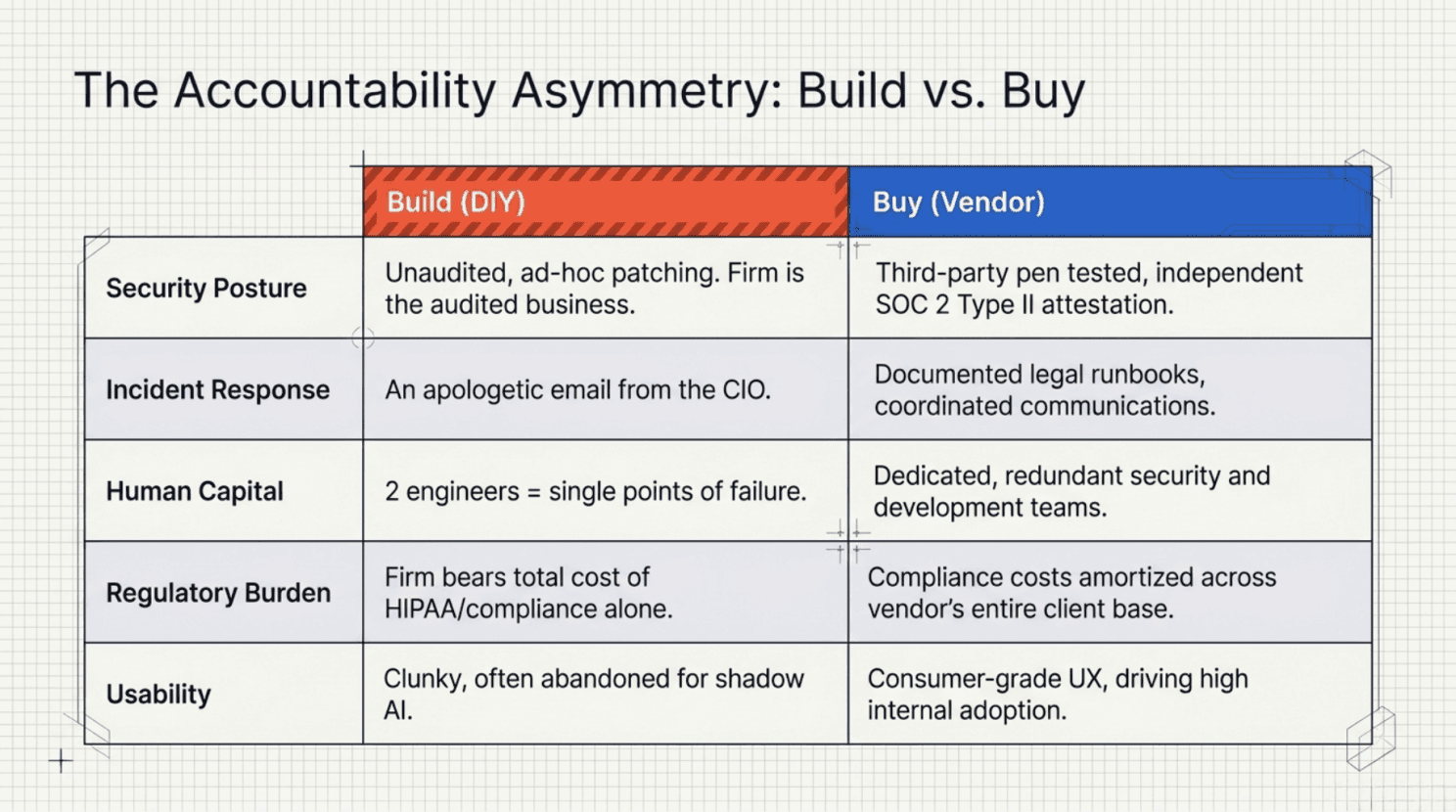

The day after an incident is when DIY hurts most. When something goes wrong (and the base rate suggests it will), the firm must explain what happened to clients, to its malpractice carrier, possibly to a court, possibly to a state bar. A vendor can produce a SOC 2 Type II report, a HIPAA assessment, an incident-response runbook, and a coordinated communications plan, because that is what its entire business exists to produce. A homegrown system typically produces an apologetic email from the CIO. The asymmetry of accountability is striking. With a vendor, the firm is a customer of an audited business with independent attestation of its controls. Without one, the firm is the audited business, except that nobody outside the building has actually audited it.

If things go wrong, who can you call?

Creating Human Dependencies You Can’t Afford to Depend On

The team you depend on can leave on Tuesday. A firm that hires two AI engineers has, in effect, hired two single points of failure. The market for AI engineering talent is among the most competitive technical labor markets in the country; senior engineers routinely receive offers well above their current salaries, and the people who built your system are exactly the people most likely to be poached. When they leave, the system effectively leaves with them: not the code itself, but all the undocumented decisions, the weird workarounds, the integration knowledge, and the model-prompt tuning that does not appear in any wiki. The firm is then left with a critical piece of infrastructure that nobody on staff fully understands. This is a familiar pattern from the early days of legal IT. AI accelerates it.

The pace of change punishes the small. Frontier models change every three to six months. New attack vectors against them are published weekly. The NAIC's Model Bulletin on insurer AI use has been adopted by 24 states, and in March 2026 twelve of them launched a pilot evaluation tool that scrutinizes AI used in claims handling, billing disputes, and total-loss decisions. [13] The EU AI Act came into force in August 2024. State privacy laws continue to accumulate. A vendor whose entire business is keeping up with this will keep up; a two-person in-house team supporting a litigation practice will not. They will fall behind, quietly, on the parts of the system that determine whether the firm can credibly tell a regulator that it has reasonable controls.

Along the way, you will accidentally start a security company. SOC 2 Type II takes between six and twelve months to achieve, costs tens of thousands of dollars in audit fees alone, and requires sustained investment in the people, processes, and continuous monitoring the audit examines. HIPAA compliance, for any firm doing claims work that touches protected health information, requires an entire program of administrative, physical, and technical safeguards. [14] A law firm that builds its own AI is not, in fact, just building an AI tool. It is taking on the regulatory posture of a software vendor without the recurring license revenue to pay for it.

The process of building software is full of surprises, not all of them pleasant ones

And the homegrown tool is rarely the easy tool. Even when it works, the in-house build is seldom the most usable option available. Commercial vendors invest heavily in user experience, onboarding, integration, and documentation, the things that determine whether an associate at 11 p.m. reaches for the firm's sanctioned system or for ChatGPT on her phone. The second piece in this series made the point at length: the firms that will capture the AI dividend are those that make the secure path the easy path. A secure path that is also clunky is one that gets quietly abandoned. The firm then ends up paying for both the homegrown system and the shadow-AI problem it was meant to solve.

In well-designed systems, security and usability are not opposed; usability is part of security

The pattern across all of these failure modes is the same. AI is unusual among technologies not for what it can do but for the gap between how easy it is to start and how hard it is to operate safely. Two engineers can clone an open-source model and have something working in a week. Turning that into something a regulated profession can defensibly run privileged client data through is not a week's work, or a quarter's. It is a discipline. And it is not, in most defense firms, a discipline that the firm has any particular reason to develop.

A secure platform contemplates security at every layer

What Built-By-Someone-Else Should Look Like

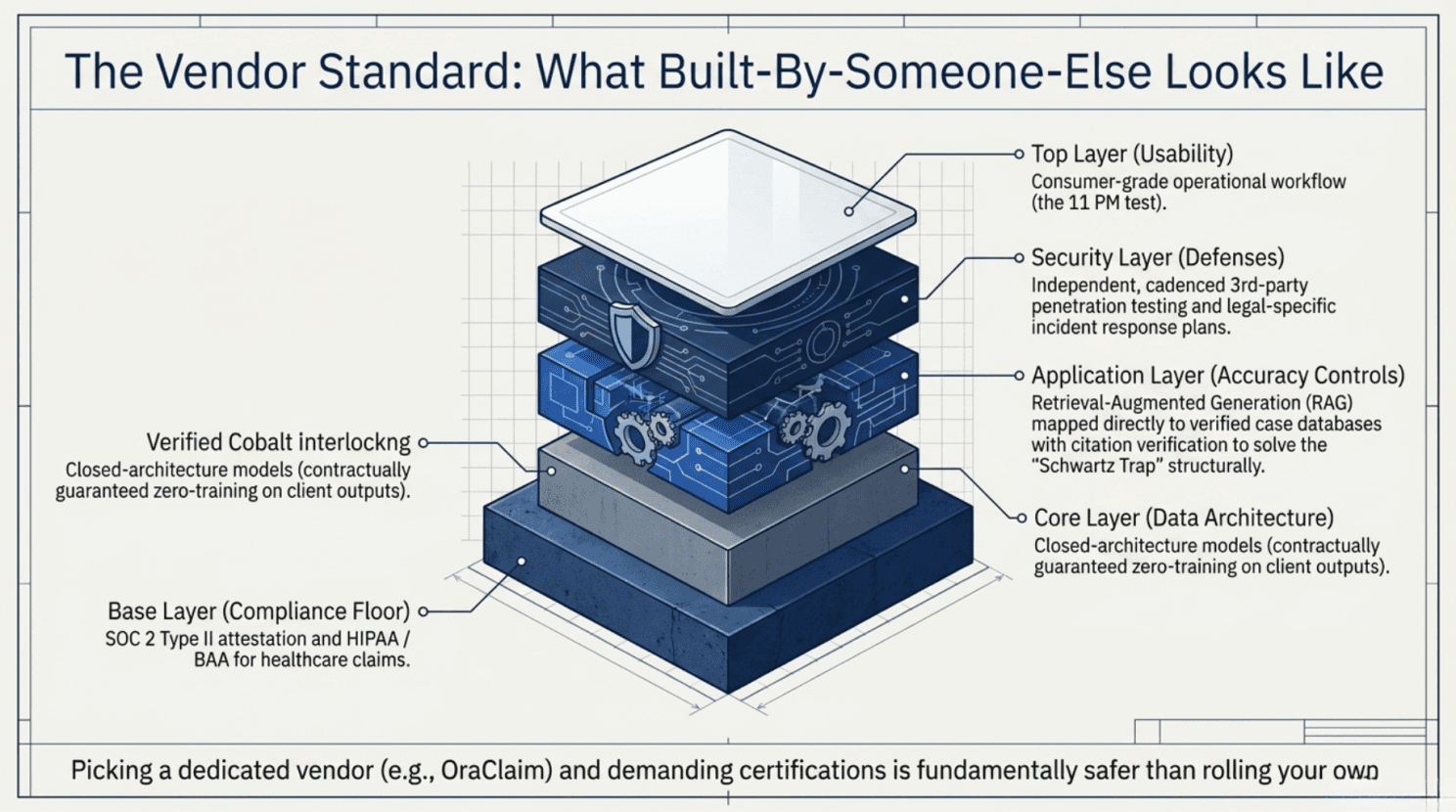

If neither refusing nor reinventing is the answer, the question becomes which vendor and what to ask of them. A short list, in declining order of importance, follows.

SOC 2 Type II is the floor, not the ceiling; a vendor without it should not be considered. HIPAA compliance and a willingness to sign a Business Associate Agreement are non-negotiable for anyone touching healthcare claims. Closed-architecture systems, in which prompts and outputs are not used to train models and this is contractually guaranteed rather than merely promised in a marketing FAQ, are essential for privileged work. Retrieval-augmented generation with citation verification addresses the Schwartz problem at the architectural level. Independent third-party penetration testing, on a documented cadence, addresses the cyber posture. The vendor should have an incident-response plan tailored to the legal industry, not a generic one repurposed from the SaaS playbook. The contract should clearly allocate liability, indemnity, and notification obligations in a way that survives an actual breach.
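To make the citation-verification idea concrete, here is a minimal Python sketch, not any vendor's implementation: extract reporter citations from a draft and flag any that cannot be found in a verified case database. It assumes citations take the simple volume-reporter-page form for a handful of federal reporters (`F.`, `F. Supp.`, `U.S.`). The regular expression, the function names, the placeholder case name, and the `verified` set standing in for an authoritative database are all hypothetical. A real system would use a full citation parser and query a trusted source, and even then an existence check only proves a case is real, not that it says what the brief claims.

```python
import re

# Hypothetical, deliberately narrow reporter grammar: F. Supp. (2d/3d), F. (2d/3d/4th), U.S.
# Real citation formats are far richer; a production system would use a dedicated parser.
REPORTER = r"(?:F\.\s?Supp\.\s?(?:2d|3d)?|F\.\s?(?:2d|3d|4th)|F\.|U\.S\.)"
CITATION_RE = re.compile(r"\b(\d{1,4})\s+(" + REPORTER + r")\s+(\d{1,5})\b")


def normalize(volume: str, reporter: str, page: str) -> str:
    """Canonical lookup key, e.g. '678 F. Supp. 3d 443' -> '678 F.Supp.3d 443'."""
    compact = re.sub(r"\s+", "", reporter)  # drop spacing variants inside the reporter
    return f"{volume} {compact} {page}"


def extract_citations(text: str) -> list[str]:
    """Return every matching reporter citation in the text, normalized, in order."""
    return [normalize(*m.groups()) for m in CITATION_RE.finditer(text)]


def unverified_citations(draft: str, verified: set[str]) -> list[str]:
    """Citations in the draft that are absent from the verified database.

    A non-empty result should hold the filing until a lawyer has read each authority.
    """
    return [c for c in extract_citations(draft) if c not in verified]


if __name__ == "__main__":
    # Toy stand-in for a verified case database (hypothetical).
    verified_db = {"678 F.Supp.3d 443"}
    draft = (
        "See Mata v. Avianca, Inc., 678 F. Supp. 3d 443 (S.D.N.Y. 2023); "
        "cf. Example v. Example, 925 F.3d 1339 (11th Cir. 2019)."  # placeholder case name
    )
    print(unverified_citations(draft, verified_db))  # prints ['925 F.3d 1339']
```

The design choice worth noting is that the check fails closed: anything it cannot match against the trusted source is surfaced for human review rather than silently accepted, which is the architectural version of reading what you file.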

And to draw on the principle from this series' second piece, usability matters nearly as much as security. A vendor whose product is technically sound but operationally clunky has solved only half the problem. The other half is whether the associate at 11 p.m., racing a deadline, will actually reach for the sanctioned tool first. The right question to ask a vendor is not just whether the security architecture holds up, but whether the staff will use what has been built.

OraClaim is one option in this market; there are others. The point is not to pick a particular vendor but to recognize that picking one and demanding the certifications is a different proposition, and a much safer one, than rolling your own.

Schwartz's Lesson, and What Follows

The Schwartz trap was never really about AI. It was about supervision. The Heppner panic was never really about AI. It was about confidentiality. The shadow-AI problem is about whether the firm has given its people tools they will use openly. And the build-it-yourself trap is about whether the firm understands the real difference between writing software and operating it. Each of these tests existed before generative AI arrived. Each of them has a new edge in 2026.

Lawyers built this profession's reputation for confidentiality with carbon paper, locked filing cabinets, and the discretion of a trusted secretary. Those tools were once novel and faintly threatening, too. The duty did not change. The means did. The firms that will look back well on this decade are those that recognize, faster than their competitors, that the duty of competence is now an active obligation and that the ways of failing it have multiplied.

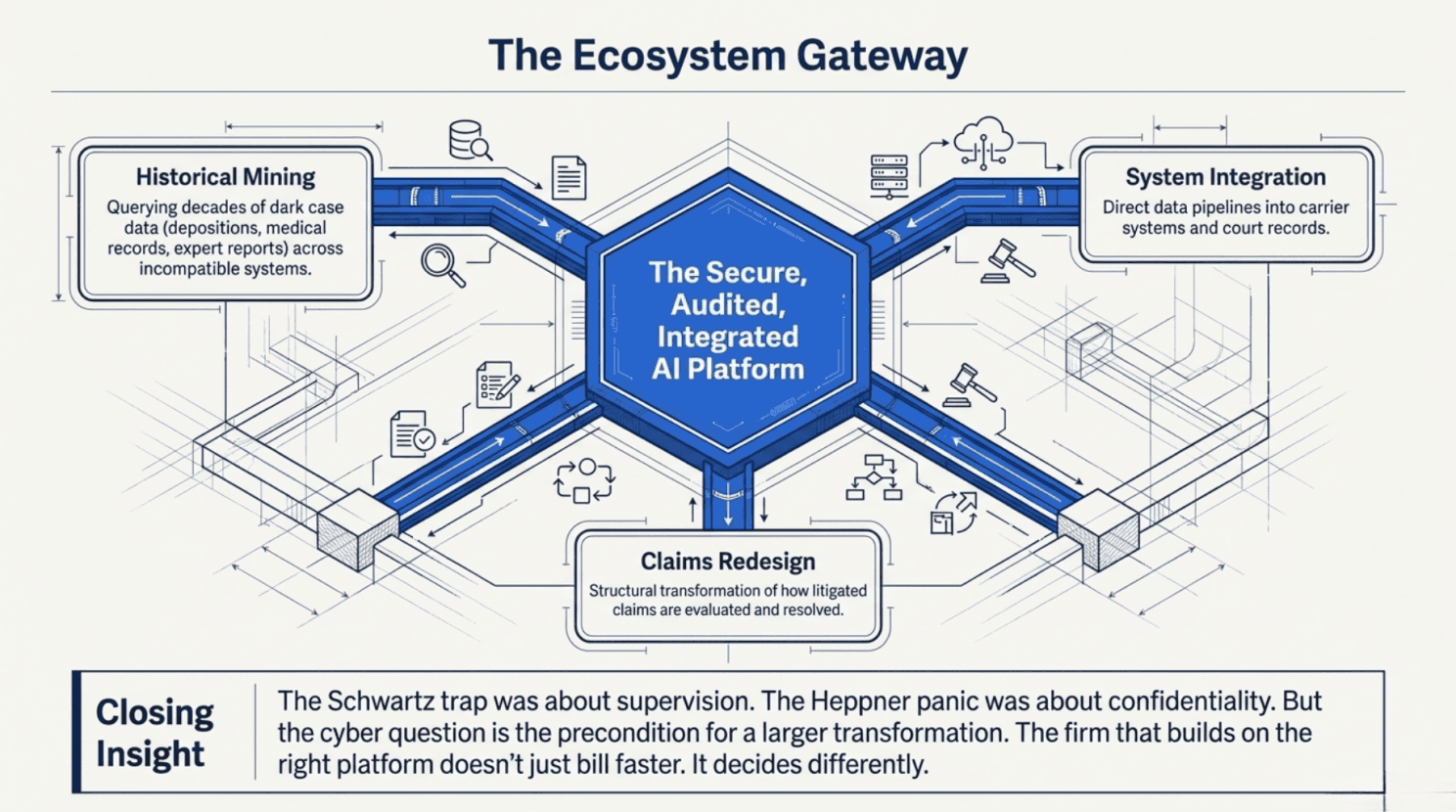

Security sets the table for system redesign

The cyber question, though, is the precondition for a larger one. A defense firm operating on a secure, audited, integrated AI platform is not merely safer than its rivals. It is in a fundamentally different competitive position. Its own historical case data (depositions, medical records, expert reports, settlement histories), scattered across decades of matters and a dozen incompatible systems, becomes accessible, queryable, and comparable. The platform can integrate with carrier systems, court records, and the wider ecosystem the litigated-claim industry is being rebuilt around. The result is not a firm that simply bills faster. It is a firm that makes different decisions. That is the subject of the next piece in this series: how the firms that have done the cyber work properly will use it to unlock data they did not realize they had, plug into the rest of the claims-resolution ecosystem, and redesign, structurally, the way litigated claims are evaluated and resolved. The financial prize argued in the first piece, the operational principle argued in the second, and the cyber discipline argued in this one all converge there.

For now, the question on the table is the smaller one: who is going to build the platform on which any of that becomes possible? Schwartz's mistake was not using ChatGPT. It was not reading what he filed. The duty, as it has always been, is to know what goes out under your name and, in 2026, to know what is running underneath it.

Notes

[1] FBI Internet Crime Complaint Center, "Silent Ransom Group Targeting Law Firms," 23 May 2025. https://www.ic3.gov/CSA/2025/250523.pdf

[2] Halcyon, "INC Ransom Group Mounts Rapid Campaign Against Law Firms," March 2026. https://www.halcyon.ai/ransomware-alerts/inc-ransom-group-mounts-rapid-campaign-against-law-firms

[3] Baker & Hostetler, Data Security Incident Response Report 2026, reported in ABA Journal, "Law firms see more cyberattacks, ransomware threats, new report says," 26 March 2026. https://www.abajournal.com/news/article/law-firms-see-more-cyberattacks-ransomware-threats-report-says

[4] Mata v. Avianca, Inc., 678 F.Supp.3d 443 (S.D.N.Y. 2023), Sanctions Order. https://law.justia.com/cases/federal/district-courts/new-york/nysdce/1:2022cv01461/575368/54/

[5] Johnson v. Dunn, No. 2:21-cv-1701 (N.D. Ala., 23 July 2025); discussed in Esquire Deposition Solutions, "Federal Court Turns Up the Heat on Attorneys Using ChatGPT for Research," 13 August 2025. https://www.esquiresolutions.com/federal-court-turns-up-the-heat-on-attorneys-using-chatgpt-for-research/

[6] United States v. Heppner, No. 1:25-cr-00503-JSR (S.D.N.Y., 17 February 2026); analysis in Harvard Law Review, "United States v. Heppner," 23 March 2026. https://harvardlawreview.org/blog/2026/03/united-states-v-heppner/

[7] Warner v. Gilbarco, Inc. (E.D. Mich., 10 February 2026); discussed in Bowditch & Dewey, "New Tech Meets Old Law: Further Clarification on the Discoverability of AI Output," 7 April 2026. https://www.bowditch.com/2026/04/07/new-tech-meets-old-law-further-clarification-on-the-discoverability-of-ai-output/

[8] IBM Think, "What Is Shadow AI?" 2025; National Cybersecurity Alliance survey reported in National Law Review, "Shadow AI Transcription Tools Create Major Compliance Risks," April 2026. https://www.ibm.com/think/topics/shadow-ai

[9] Cyberhaven enterprise data, cited in Balanced+, "What Is Shadow AI? Security Risks and How to Respond," April 2026. https://balanced.plus/what-is-shadow-ai-security-risks-and-how-to-respond/

[10] North Carolina Bar Association, "Beyond the Ban: Why Your Law Firm Needs a Realistic AI Policy in 2026," 13 January 2026. https://www.ncbar.org/2026/01/13/beyond-the-ban-why-your-law-firm-needs-a-realistic-ai-policy-in-2026/

[11] W. Zou et al., "PoisonedRAG: Knowledge Corruption Attacks to Retrieval-Augmented Generation of Large Language Models," USENIX Security 2025; surveyed in Cyber Defense Magazine, "Prompt Injection and Model Poisoning," 28 September 2025. https://www.cyberdefensemagazine.com/prompt-injection-and-model-poisoning-the-new-plagues-of-ai-security/

[12] OWASP, "LLM01:2025 Prompt Injection," Top 10 for LLM Applications. https://genai.owasp.org/llmrisk/llm01-prompt-injection/

[13] VerifyWise, "NAIC Model Bulletin on Use of AI Systems by Insurers," January 2026. https://verifywise.ai/ai-governance-library/sector-specific-governance/naic-ai-model-bulletin; NAIC implementation tracker. https://content.naic.org/sites/default/files/cmte-h-big-data-artificial-intelligence-wg-map-ai-model-bulletin.pdf

[14] Secureframe, "SOC 2 + HIPAA Compliance: The Perfect Duo for Data Security." https://secureframe.com/hub/hipaa/and-soc-2-compliance